AI Factories: The New Backbone of Enterprise AI Infrastructure

Every enterprise pursuing artificial intelligence at scale eventually hits the same wall. The pilot works. The proof of concept impresses the board. Then the team tries to move it into production – and the infrastructure buckles. Storage bottlenecks choke data pipelines, GPUs sit idle waiting for congested networks, and security becomes an afterthought bolted onto a fragile stack. The problem is not ambition. It is architecture.

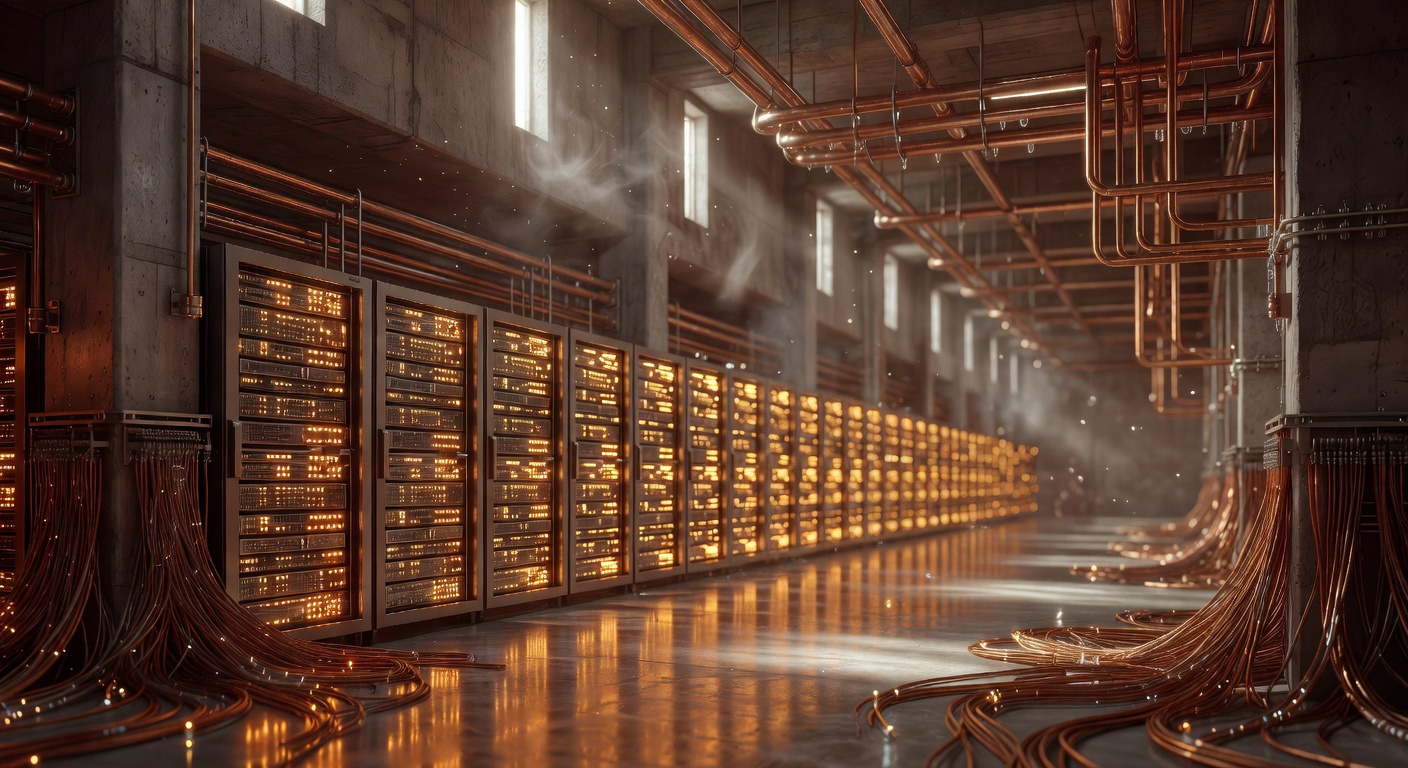

This is the gap that AI factories are designed to close. Unlike traditional data centers optimized for general-purpose CPU workloads, AI factories are integrated, purpose-built infrastructure systems engineered to produce intelligence – in the form of tokens from large language models – at enterprise scale. They unify compute, networking, storage, security, and orchestration into modular platforms that support continuous training, fine-tuning, inference, and data pipelines. The result is AI that stops being a scarce resource rationed to a handful of high-profile projects and becomes a shared capability any team can build on.

The shift is already underway. Major vendors are delivering turnkey and validated designs, enterprises are deploying hundreds of GPUs in hybrid configurations, and a new class of GPU-dense facilities is emerging that operates at 130 to 240 kW per rack – a staggering leap from the 2 to 15 kW per rack that legacy data centers were built to handle. Here is how the AI factory model works, who is building them, and what it takes to get one right.

Why Traditional Data Centers Cannot Keep Up

The core issue is a mismatch between exponential AI demand and linear infrastructure capacity. Data volumes and model complexity have grown far faster than the roughly two-year doubling of transistor counts described by Moore’s Law, which long set the pace for CPU performance gains. General-purpose computing has reached its practical limits for AI workloads, creating a tipping point in favor of accelerated computing platforms built around GPUs and specialized accelerators.

Legacy data centers were designed for server workloads running at 2 to 15 kW per rack. AI workloads demand a fundamentally different approach to power, cooling, networking, and even metallurgy. Training and inference generate massive east-west traffic between GPU servers and north-south traffic between clients, storage, and compute. These patterns require lossless, congestion-free networking – and when they do not get it, the consequences are immediate. During high-demand phases like model training or retrieval-augmented generation, network congestion causes job stalls where expensive GPU resources sit idle waiting for data. That drives up cost per token and extends project timelines.
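The link between stalled GPUs and cost per token can be made concrete with a little arithmetic. The sketch below is illustrative only: the hourly GPU price and peak token rate are hypothetical inputs, not figures from any vendor, and the function simply divides cost by the tokens actually produced once stall time is subtracted.

```python
def cost_per_million_tokens(gpu_hourly_cost: float,
                            peak_tokens_per_second: float,
                            stall_fraction: float) -> float:
    """Effective cost per one million tokens for a single GPU.

    stall_fraction is the share of wall-clock time the GPU sits idle
    waiting for data (0.0 means never stalled; must be below 1.0).
    """
    if not 0.0 <= stall_fraction < 1.0:
        raise ValueError("stall_fraction must be in [0, 1)")
    useful_tokens_per_hour = peak_tokens_per_second * 3600 * (1.0 - stall_fraction)
    return gpu_hourly_cost / useful_tokens_per_hour * 1_000_000


# Hypothetical inputs: $4.00 per GPU-hour and 2,000 tokens/s at peak.
baseline = cost_per_million_tokens(4.00, 2000, stall_fraction=0.0)
congested = cost_per_million_tokens(4.00, 2000, stall_fraction=0.25)

print(f"no stalls:  ${baseline:.3f} per 1M tokens")
print(f"25% stalls: ${congested:.3f} per 1M tokens")
print(f"cost increase: {congested / baseline - 1:.0%}")
```

Under these assumptions, a GPU that spends a quarter of its time waiting on the network produces tokens at about a third higher unit cost, even though the hardware bill is unchanged.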

The real bottleneck, as Lambda’s VP of Data Center Infrastructure has framed it, is not GPU scarcity in the hardware sense. It is the shortage of data centers actually built for AI-scale workloads. Enterprises need facilities that function as mini-supercomputers, and most existing infrastructure simply was not designed for that.

What an AI Factory Actually Looks Like

An AI factory integrates every stage of AI production into a single coordinated system. Rather than grafting a dedicated AI cluster onto an existing data center, it fully merges data ingestion, training, fine-tuning, and inference with the compute, networking, and storage layers required to run them efficiently.

| Layer | Core Function | Key Components |
|---|---|---|
| Compute (GPU/HPC) | Raw processing for training and inference | GPU/TPU accelerators, HPC clusters, parallel storage, advanced cooling |
| Data Ingestion and Pipelines | Data gathering, cleaning, integration | Feature engineering, governance controls, quality pipelines |
| Model Development | Hypothesis validation and optimization | Experimentation platforms, A/B testing, production-scale evaluation |
| Networking | Lossless data movement between GPUs, storage, and clients | Silicon One switches, BlueField DPUs, Spectrum-X networking |

The output of this system is tokens – the fundamental units of intelligence produced by large language models. As NVIDIA’s VP of DGX Systems has described it, customers want one type of infrastructure that can produce the tokens they need today while remaining ready for the models of tomorrow. The factory must be flexible and adaptable, because new reasoning models, data models, and architectures emerge constantly.

Power, Cooling, and the Physics of Scale

The physical demands of AI factories represent a generational shift in facility engineering. Next-generation GPU-dense facilities operate at 130 to 240 kW per rack, enabled by advances in cooling technology, fluid dynamics, and modular design. Lambda’s approach treats data center components as composable elements – power, water, and air – that can be assembled and reconfigured as hardware evolves.
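To get a feel for what these densities mean for floor planning, the rack ranges quoted in this article can be plugged into a short calculation. This is a back-of-the-envelope sketch: the 1,000 kW IT load is a hypothetical example, and it ignores cooling overhead, redundancy, and networking or storage racks.

```python
import math

# Per-rack power ranges quoted in the article, in kW.
LEGACY_KW_PER_RACK = (2, 15)
AI_KW_PER_RACK = (130, 240)


def racks_needed(total_it_load_kw: float, kw_per_rack: float) -> int:
    """Number of racks required to host a given IT load at a given density."""
    return math.ceil(total_it_load_kw / kw_per_rack)


# How many times denser is an AI rack than a legacy rack?
min_ratio = AI_KW_PER_RACK[0] / LEGACY_KW_PER_RACK[1]  # least extreme pairing
max_ratio = AI_KW_PER_RACK[1] / LEGACY_KW_PER_RACK[0]  # most extreme pairing
print(f"density increase: {min_ratio:.1f}x to {max_ratio:.0f}x")

# Hypothetical 1,000 kW IT load.
load_kw = 1000
print("legacy racks at 15 kW:", racks_needed(load_kw, LEGACY_KW_PER_RACK[1]))
print("AI racks at 130 kW:   ", racks_needed(load_kw, AI_KW_PER_RACK[0]))
print("AI racks at 240 kW:   ", racks_needed(load_kw, AI_KW_PER_RACK[1]))
```

The same load that would fill dozens of legacy racks fits in a handful of AI racks, which is why power delivery and heat removal per rack, rather than floor space, become the limiting design factors.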

A smaller enterprise deployment might consist of three racks of GB300 GPUs alongside networking and storage racks. Larger multi-density centers mix high-power AI racks with legacy infrastructure. In both cases, the mechanical, electrical, and plumbing systems must be planned early and in close coordination – retrofitting is expensive and slow. Building these systems from scratch can take years, which is why many enterprises are pursuing staged, hybrid approaches that modernize incrementally.

Vendor Ecosystems and Validated Designs

The AI factory market has matured rapidly. Several major vendors now offer turnkey or validated solutions that collapse deployment timelines:

- NVIDIA Enterprise Reference Architectures power AI Factory Validated Designs built on Blackwell HGX B200 systems, RTX PRO Servers, Spectrum-X networking, and Run:ai for GPU orchestration. NVIDIA’s own internal AI factory – supporting hundreds of AI workflows – validated this approach before it was offered externally.

- Cisco Secure AI Factory with NVIDIA integrates compute, Silicon One switches, BlueField DPUs, storage, security, and observability into prevalidated designs that extend existing Ethernet environments.

- Lambda offers public cloud GPU leasing (hours to a year) and private cloud contracts ranging from 64 to tens of thousands of GPUs, with modular facilities designed for rapid deployment.

- ASUS deploys ESC8000 servers and AI PODs with NVIDIA GB200/GB300 NVL72 for centralized compute, plus the ASUS Infrastructure Deployment Center for automated OS and firmware rollout across 1,000+ nodes.

- WEKA provides scalable storage through its NeuralMesh platform, delivering sub-millisecond latencies and tens of terabytes per second throughput for unified AI data pipelines.

The common thread is validated, repeatable architecture. NVIDIA’s internal deployment revealed that early planning for networking and storage, close alignment between hardware and software releases, and consistent platforms were essential to turning experimental setups into enterprise-grade systems.

Deployment Models: Cloud, On-Prem, Hybrid, and Edge

Not every enterprise needs the same AI factory configuration. The right model depends on workload characteristics, regulatory requirements, and cost tolerance.

| Model | Best For | Key Trade-offs |
|---|---|---|
| Cloud AI | Variable workloads, limited internal expertise | Data residency risks; costs exceed on-prem at sustained high utilization |
| On-Premise | Regulated sectors (finance, healthcare, defense) | Full data sovereignty; higher upfront investment |
| Hybrid (recommended for most) | Burst training in cloud, low-latency inference on-prem | Flexibility for 80%+ of enterprises; requires multi-cloud orchestration |
| Edge AI | Real-time applications (manufacturing, autonomous systems) | Prioritizes inference latency over model size |

A hybrid approach – combining on-premises GPU infrastructure with cloud bursting for peak demand – suits the majority of organizations. SES AI, a battery manufacturer, demonstrated this effectively by partnering with Crusoe Cloud to deploy two SLURM clusters: Cluster A with 128 H100 GPUs (16 nodes of 8 GPUs each) and Cluster B with 64 A100 GPUs (8 nodes of 8 GPUs each), plus 50 TB of shared storage and 10 TB NFS per cluster. This setup supported 10 to 15 data scientists running LLMs and material simulations, completing a 12-month research project with optimized GPU utilization and minimal disruptions – all without requiring deep DevOps expertise from the research team.
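The SES AI layout can be written down as a small data model to check the GPU totals and see how much capacity each researcher had on average. This is only a sketch of the figures quoted above: the class and field names are hypothetical, and the per-person numbers are simple averages, not a description of how jobs were actually scheduled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SlurmCluster:
    name: str
    gpu_model: str
    nodes: int
    gpus_per_node: int
    nfs_tb: int  # NFS capacity attached to this cluster

    @property
    def total_gpus(self) -> int:
        return self.nodes * self.gpus_per_node


clusters = [
    SlurmCluster("A", "H100", nodes=16, gpus_per_node=8, nfs_tb=10),
    SlurmCluster("B", "A100", nodes=8, gpus_per_node=8, nfs_tb=10),
]

total_gpus = sum(c.total_gpus for c in clusters)
for c in clusters:
    print(f"Cluster {c.name}: {c.total_gpus} x {c.gpu_model}")
print("total GPUs:", total_gpus)

# Average GPUs per data scientist for a team of 10 to 15 people.
for team_size in (10, 15):
    print(f"{team_size} scientists -> {total_gpus / team_size:.1f} GPUs each on average")
```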

Scaling with Virtual Clusters and Dynamic GPU Provisioning

For enterprises managing hundreds of GPUs across teams, the orchestration layer is critical. Kubernetes on its own does not add or remove GPU nodes as demand changes, which leads to either overprovisioning (expensive) or queuing (slow). The emerging best practice combines virtual clusters with dynamic node provisioning to achieve multi-tenant isolation and cost control.

The workflow is straightforward. A data scientist submits a job requiring, say, 16 H100 GPUs for training. The autoscaler detects pending pods, checks available providers – DGX on-prem, AWS p5 instances, Azure ND-series, bare metal via MAAS – and provisions two DGX nodes (8 GPUs each) directly into the requesting team’s virtual cluster. When the job completes, the nodes deprovision and return to the shared pool.
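The provisioning loop described above can be sketched in a few lines of plain Python. This is a toy model, not the API of any real autoscaler or virtual cluster product: `Provider`, `VirtualCluster`, `provision`, and `deprovision` are hypothetical names, the free-node counts are made up, and a real system would also handle partial fits, quotas, and failures.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Provider:
    name: str
    gpus_per_node: int
    free_nodes: int


@dataclass
class VirtualCluster:
    team: str
    nodes: list[str] = field(default_factory=list)  # provider name for each attached node


def provision(job_gpus: int, providers: list[Provider], vc: VirtualCluster) -> list[str]:
    """Attach nodes from the first provider (in priority order) that can fit the job."""
    for p in providers:
        needed = math.ceil(job_gpus / p.gpus_per_node)
        if p.free_nodes >= needed:
            p.free_nodes -= needed
            added = [p.name] * needed
            vc.nodes.extend(added)
            return added
    return []  # nothing fits: the job stays pending


def deprovision(added: list[str], providers: list[Provider], vc: VirtualCluster) -> None:
    """Return nodes to the shared pool once the job has finished."""
    by_name = {p.name: p for p in providers}
    for name in added:
        vc.nodes.remove(name)
        by_name[name].free_nodes += 1


# Hypothetical shared pool, listed in priority order.
pool = [
    Provider("dgx-on-prem", gpus_per_node=8, free_nodes=4),
    Provider("aws-p5", gpus_per_node=8, free_nodes=10),
]
team_vc = VirtualCluster(team="research")

added = provision(16, pool, team_vc)   # the 16-GPU training job
print(added)                           # ['dgx-on-prem', 'dgx-on-prem']
nodes_during_job = list(team_vc.nodes)

deprovision(added, pool, team_vc)      # job complete
print(team_vc.nodes, pool[0].free_nodes)
```

The priority-ordered list is a deliberate simplification: it tries on-prem capacity first and falls through to cloud providers only when the on-prem pool cannot fit the whole job.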

This approach targets 65 to 75 percent GPU utilization and can reduce costs by 60 to 70 percent compared to static allocation. A recommended hybrid balance splits node pools across 50 percent on-prem DGX, 30 percent AWS p5, and 20 percent Azure ND. Scheduling data transfers in off-peak windows, such as 2 to 6 AM UTC, can cut transfer fees by an additional 30 percent.
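Turning the 50/30/20 balance into whole node counts needs a rounding rule, since a fleet rarely divides evenly. The sketch below uses the largest-remainder method, which is one reasonable choice rather than anything prescribed by the vendors; the pool names and `split_nodes` are hypothetical.

```python
# Percent of nodes per pool, following the 50/30/20 balance described above.
HYBRID_SPLIT = {"on-prem-dgx": 50, "aws-p5": 30, "azure-nd": 20}


def split_nodes(total_nodes: int, percent_by_pool: dict[str, int]) -> dict[str, int]:
    """Split a node count across pools so that the parts always sum to the total."""
    if sum(percent_by_pool.values()) != 100:
        raise ValueError("percentages must sum to 100")
    # Integer arithmetic avoids floating-point rounding surprises.
    shares = {name: total_nodes * pct // 100 for name, pct in percent_by_pool.items()}
    remainders = {name: total_nodes * pct % 100 for name, pct in percent_by_pool.items()}
    leftover = total_nodes - sum(shares.values())
    # Hand leftover nodes to the pools with the largest fractional remainder.
    for name in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        shares[name] += 1
    return shares


print(split_nodes(20, HYBRID_SPLIT))
print(split_nodes(7, HYBRID_SPLIT))
```

With 20 nodes the split is exact; with 7 nodes the one leftover node goes to the on-prem pool because it has the largest fractional share.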

Common Pitfalls to Avoid

- Overprovisioning GPUs: Without dynamic autoscaling, idle GPU capacity at $60,000 per GPU per year becomes a critical business issue.

- Data silos: Isolated databases prevent models from accessing the full breadth of enterprise data. Consolidate into unified data lakes early.

- Single-cloud lock-in: Relying on one provider can waste 30 percent of budget on data transfers. Provision across three or more providers with dynamic selection.

- Weak tenant isolation: Standard Kubernetes namespaces provide only soft isolation. Use virtual cluster private nodes for production workloads.

- Neglecting governance: Ungoverned workflows fail at scale. Enforce per-tenant role-based access controls from day one using Infrastructure as Code tools like Terraform and Ansible.
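The overprovisioning pitfall is easy to quantify. The sketch below takes the $60,000 per GPU per year figure from the list above and applies it to a hypothetical fleet of 200 GPUs; the 30 percent utilization used for the static case is an assumed value for illustration, while 70 percent sits in the middle of the 65 to 75 percent target range.

```python
COST_PER_GPU_PER_YEAR = 60_000  # figure quoted in the article, in USD


def idle_cost(gpu_count: int, utilization: float) -> float:
    """Annual spend on GPU capacity that is paid for but not used."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return gpu_count * (1.0 - utilization) * COST_PER_GPU_PER_YEAR


fleet = 200  # hypothetical fleet size
static_idle = idle_cost(fleet, 0.30)   # assumed utilization with static allocation
dynamic_idle = idle_cost(fleet, 0.70)  # midpoint of the 65-75 percent target

print(f"idle spend at 30% utilization: ${static_idle:,.0f}")
print(f"idle spend at 70% utilization: ${dynamic_idle:,.0f}")
print(f"difference: ${static_idle - dynamic_idle:,.0f} per year")
```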

The Counterargument: When AI Factories Are the Wrong Shape

Not everyone is convinced that AI factories are the right default. A credible contrarian view holds that most enterprise AI workloads are fragmented, distributed, closely tied to operational systems, and incremental – not the clean, large-scale training jobs that centralized GPU clusters are optimized for. For the 70 to 80 percent of enterprise workloads that never need peak GPU capacity, a CPU-first strategy may offer better proximity to existing systems, more predictable costs, and greater operational simplicity.

This critique has merit. AI factories built around supply – “we have compute, let’s find ways to use it” – can introduce friction by forcing data to move, workloads to conform, and costs to become opaque. The most effective enterprise strategies start with the work: where the data lives, where decisions are made, what latency constraints exist, and what can be deployed incrementally. For many use cases, that points to distributed inference close to the edge rather than a monolithic factory.

The practical takeaway is that AI factories make the most sense for organizations with genuine high-compute needs – large-scale training, complex fine-tuning, heavy inference serving – while lighter workloads may be better served by more distributed architectures.

Real-World Results and Efficiency Gains

NVIDIA’s own internal AI factory offers the most comprehensive case study. Software engineering agents completed over 18 years’ worth of development work in just over a year. Hardware design agents completed over 16 years’ worth of engineering work in the same period, supporting more than 100 workflows and answering over 1 million engineering queries. Supply chain agents reduced daily customer allocation planning from three hours to 10 minutes, and weekly inventory planning from 15 hours to one hour.

On the infrastructure efficiency side, deploying intelligent load balancing on BlueField DPUs has demonstrated 40 percent higher token throughput, 61 percent faster time-to-first-token, 34 percent lower latency, and 80 percent less CPU usage compared to traditional load balancers – which consume roughly 12 CPU cores versus 2 for DPU-based solutions. These are not marginal gains. In an AI factory where every GPU millisecond has a dollar value, this kind of efficiency directly impacts cost per token and overall ROI.
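The percentages above are relative figures, so it helps to apply them to a concrete baseline. In the sketch below the core counts come from the text, while the baseline throughput and latency are hypothetical values chosen only to show the arithmetic.

```python
TRADITIONAL_LB_CORES = 12  # CPU cores used by a traditional load balancer
DPU_LB_CORES = 2           # CPU cores used by the DPU-based approach

# Relative CPU saving implied by the core counts.
cpu_reduction = (TRADITIONAL_LB_CORES - DPU_LB_CORES) / TRADITIONAL_LB_CORES


def with_throughput_gain(baseline_tokens_per_s: float, gain: float = 0.40) -> float:
    """Token throughput after a relative gain (default: the 40 percent figure)."""
    return baseline_tokens_per_s * (1.0 + gain)


def with_latency_cut(baseline_ms: float, cut: float = 0.34) -> float:
    """Latency after a relative reduction (default: the 34 percent figure)."""
    return baseline_ms * (1.0 - cut)


print(f"CPU cores freed per load balancer: {TRADITIONAL_LB_CORES - DPU_LB_CORES}")
print(f"implied CPU reduction: {cpu_reduction:.0%}")

# Hypothetical baseline: 10,000 tokens/s and 100 ms latency.
print(f"throughput: {with_throughput_gain(10_000):,.0f} tokens/s")
print(f"latency:    {with_latency_cut(100):.0f} ms")
```

The core counts imply a reduction of about 83 percent, which is consistent with the rounded 80 percent figure quoted above.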

Key Takeaways

AI factories represent a fundamental shift from project-centric AI experiments to platform-centric infrastructure designed for shared, scalable capabilities. They unify compute, data pipelines, model development, networking, and security into integrated systems that produce intelligence at industrial scale. The physical demands are significant – 130 to 240 kW per rack, lossless networking, advanced cooling – but validated reference architectures from major vendors have dramatically reduced deployment complexity.

The most successful implementations start small (as few as three GPU racks), scale modularly, and adopt hybrid models that blend on-premises infrastructure with cloud bursting. Dynamic GPU provisioning through virtual clusters targets 65 to 75 percent utilization while cutting costs by 60 to 70 percent. And critically, organizations should resist the temptation to over-apply the factory model – reserving GPU-dense infrastructure for workloads that genuinely require it while serving lighter use cases through more distributed approaches.

The AI factory is not a universal solution. But for enterprises serious about moving AI from pilot to production at scale, it is rapidly becoming the essential foundation.

Sources

- Scaling AI Demands a New Infrastructure Playbook

- Building AI Factories for the Enterprise – NVIDIA

- AI Factories: Redefining the Enterprise Playbook

- NVIDIA AI Factory Drives Enterprise Innovation

- AI Factories and Enterprise Acceleration – WEKA

- Scalable AI Infrastructure for SES AI – DeepSense

- Scaling AI Factory Infrastructure – vCluster

- AI Factories Are the Wrong Shape – CTO Advisor

- 5-Step Guide to Scalable AI Infrastructure – DDN

- AI Factories Need Intelligent Infrastructure – F5

- 5 Steps to Scale AI for Enterprise Success